The question “will AI take your job?” is the wrong question. Not because the answer is no; it may well be yes, in whole or in part, sooner than feels comfortable. It is the wrong question because it assumes a binary that does not match how technological displacement actually works, and that framing produces exactly the wrong response: panic or denial, neither of which is useful.

The right question is: which part of what you do will AI do better than you within five years, which part will it augment, and which part requires something that AI structurally cannot replicate? That is a harder question to answer. It requires honest assessment rather than reassurance-seeking. But it is the only question that leads anywhere productive.

The errors in the current public understanding

The public conversation about AI and employment contains two dominant errors that push in opposite directions and cancel each other out in a way that produces collective inaction.

Will AI take your job, and will you panic?

The first error is catastrophism without specificity. Predictions that AI will eliminate 30, 40, or 50 percent of jobs within a decade generate alarm without actionable information. They are probably correct in aggregate and almost entirely useless at the individual level, because they say nothing about which jobs, on what timeline, through which mechanisms, and with what alternatives available. Alarm without a map is not a warning. It is noise.

The second error is the historical analogy deployed as reassurance. The argument runs: we have always feared technological unemployment. The Luddites feared the mechanised loom, workers feared the car assembly line, typists feared the word processor, and each time employment recovered and new jobs emerged that no one had predicted. The history is accurate, but the analogy may mislead. Earlier waves of automation mostly displaced physical and mechanical tasks and pushed workers toward cognitive work; AI acts on cognitive work itself. The analogy assumes that the speed, breadth, and cognitive depth of AI displacement will produce the same recovery pattern as previous technological transitions, and that assumption has not been tested.

What is actually being replaced — and what is not

AI displaces tasks, not jobs — at least initially. A job is a bundle of tasks, and the displacement is occurring unevenly within that bundle. Tasks involving pattern recognition, information synthesis, routine text generation, data classification, and structured decision-making under well-defined parameters are already being done faster, cheaper, and in many cases better by AI systems. That is not a future scenario. It is the current situation.

Other tasks are considerably harder to automate: contextual judgment in novel situations, ethical reasoning with genuine stakes, trust-based human relationships, physical world navigation in unpredictable environments and, critically, the integration of tacit knowledge that has never been made explicit and therefore cannot be trained on. These tasks are not impossible to automate. They are hard, and automating them will take longer.

The professions most immediately exposed include many that were predicted to be safe because they required education and expertise. Radiologists, junior lawyers, financial analysts, medical diagnosticians, code reviewers, content producers, translators, and many middle-management functions are all in the zone of substantial near-term disruption. These knowledge workers, often highly educated and well-compensated, historically occupied the category of “safe from automation.” That category no longer exists.

The people who will not be replaced

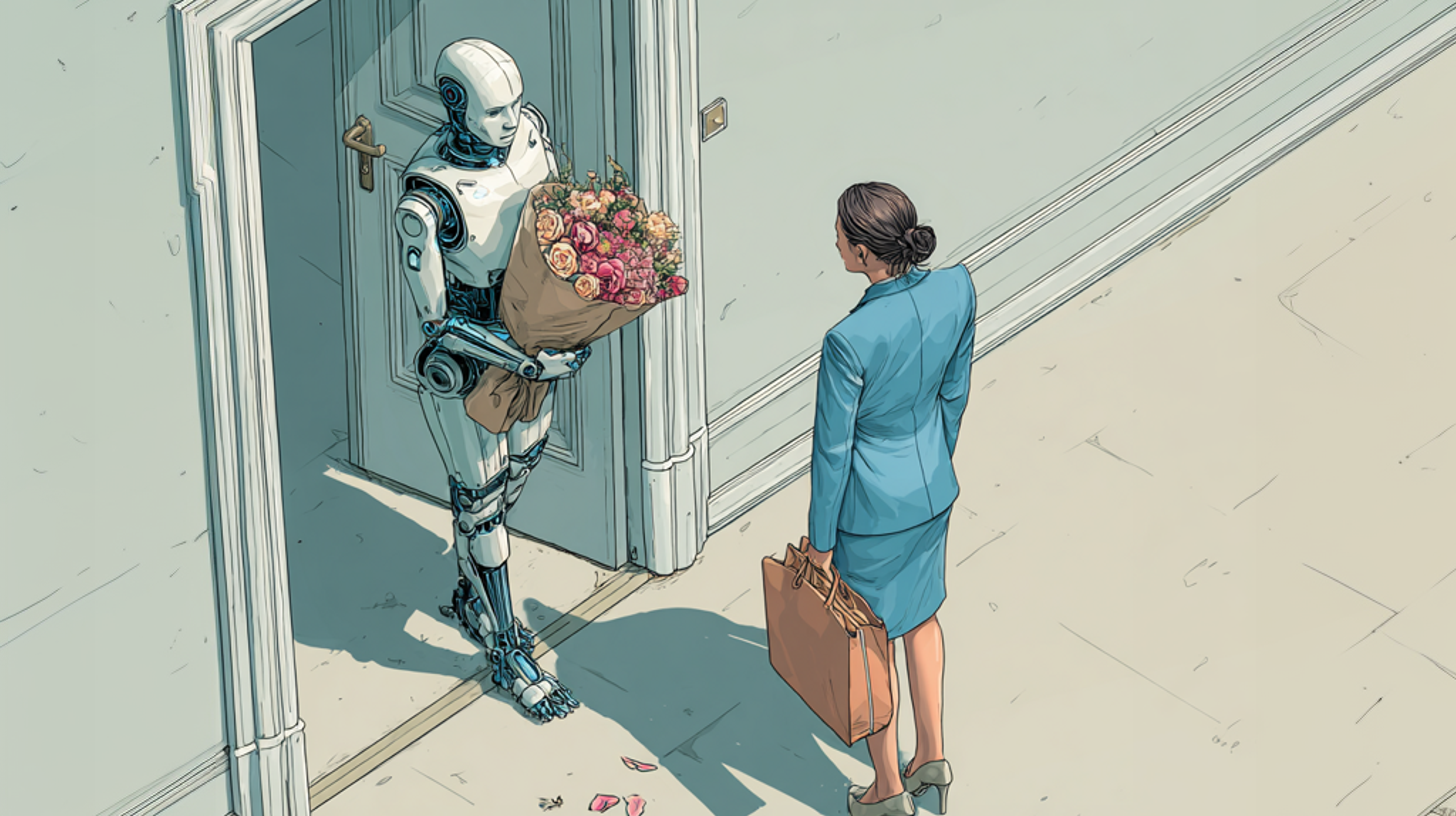

There is a pattern in the work that survives technological displacement, and it is consistent across transitions: the work that survives is work where the human presence is itself the value, not merely the delivery mechanism for the value. A therapist is not valuable because she synthesises psychological research. AI can do that. She is valuable because she is a specific human being in a room with a specific other human being, and that relational reality is doing most of the therapeutic work. A leader is not valuable because she knows more than her team. AI knows more than every team. She is valuable because of the judgment, accountability, and trust that flow from human authority in human contexts.

More specifically: the people who will be least displaced are those who learn to work with AI as a genuine cognitive partner — not as a search engine, not as an autocomplete tool, but as a thinking collaborator that extends their range and accelerates their output. This is what extended thinking means in practice. It is not about AI doing your thinking for you. It is about AI doing the part of your thinking that is routine, derivative, or synthesis-based, so that you can concentrate your cognitive resources on the part that requires genuine judgment, originality, or contextual wisdom.

The person who learns this will be capable of output that exceeds what either they alone or AI alone can produce. The person who refuses to learn it — out of principle, fear, or simple inertia — will find that their output is matched and then exceeded by someone who has learned it. This is not a metaphor. It is already happening in legal drafting, medical imaging interpretation, financial modelling, and software development. The gap between AI-augmented and non-augmented practitioners is measurable and growing.

Why politics is not addressing this — and why that matters

The political failure to engage seriously with AI-driven labour market transformation is not accidental. It reflects the structural weaknesses of contemporary democratic politics discussed elsewhere in this series: the preference for the immediate and emotionally resonant over the complex and consequential, the electoral incentive to avoid delivering uncomfortable messages to large groups of voters, and the cognitive distance between the pace of technological change and the pace of democratic deliberation.

AI displacement is a problem that will become politically impossible to ignore at exactly the point when it is too late for proactive policy to meaningfully mitigate. The transition costs — retraining, social support, educational restructuring, regulatory frameworks — are front-loaded. The political incentive to pay them is back-loaded. This mismatch is not a failure of political will. It is a structural feature of the system, and it produces predictable outcomes: under-investment in transition support, regulatory frameworks that lag technology by a decade, and public anxiety that is validated by politicians but not addressed by policy.

What makes this particularly consequential is the speed. Previous technological transitions unfolded over decades, giving labour markets, education systems, and social institutions time to adapt, imperfectly and unequally, but eventually. The current transition is faster. The models that will be economically significant in 2027 are already in development or deployment today. The educational systems preparing people for the jobs those models will reshape are operating on curricula designed five to ten years ago. The gap is not closing. It is widening.

A key component in answering “will AI take your job?”: the discipline of extended thinking

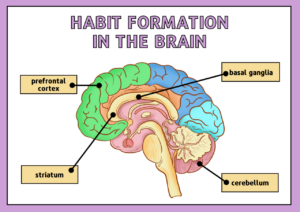

Extended thinking — the deliberate practice of holding a complex problem open longer than is comfortable, resisting premature resolution, and bringing multiple analytical frameworks to bear before concluding — is the cognitive skill that AI cannot replicate and that the current moment most urgently requires. Not because it is uniquely human in some philosophical sense, but because it is the skill that allows humans to use AI well rather than be replaced by it.

AI systems, including the most sophisticated current models, are optimised for response generation. They are not, at present, optimised for the kind of sustained, recursive, genuinely uncertain reasoning that the hardest problems require. They can accelerate and enrich that reasoning when a human is conducting it. They cannot replace it, because the reasoning requires a perspective — a located, committed, morally accountable point of view — that AI does not have.

The practical implication is straightforward: the most valuable thing a professional can do right now is not to learn how to use AI tools (though that is necessary). It is to deepen the capacities that AI cannot replicate — judgment, ethical reasoning, relational trust, and the willingness to stay with genuinely hard problems long enough to understand them. AI will do the rest faster than you can. The question is whether you are directing it toward something worth doing.

The Explanatorium — key concepts in this piece: Will AI take your job?

The round-up and the back-up for “Will AI take your job?”

Task displacement vs. job displacement: AI displaces task bundles within jobs before it replaces jobs entirely. The disruption is uneven and job-specific.

The historical analogy caveat: Previous automation displaced physical and mechanical tasks and created new jobs built on cognitive tasks. AI displaces cognitive tasks, the very category those new jobs depended on, so the recovery pattern may not repeat.

Augmentation vs. replacement: The critical variable is whether a worker uses AI as a cognitive partner (augmentation) or competes with it on its own terms (replacement).

Extended thinking: The deliberate practice of sustained, recursive reasoning under genuine uncertainty, a capacity AI accelerates but cannot replicate.

Political mismatch: AI displacement costs are front-loaded; political incentives to address them are back-loaded. The result is systematic under-investment in transition.

Key reading: Daron Acemoglu & Simon Johnson, Power and Progress (2023) · Erik Brynjolfsson & Andrew McAfee, The Second Machine Age (2014) · David Autor on labour market polarisation · Ethan Mollick, Co-Intelligence (2024).

Lifestyle platforms from Leisuretalk.net